Weekly on Tuesdays from 09:00-10:00 US PT, 12:00-13:00 US ET, 17:00-18:00 UTC

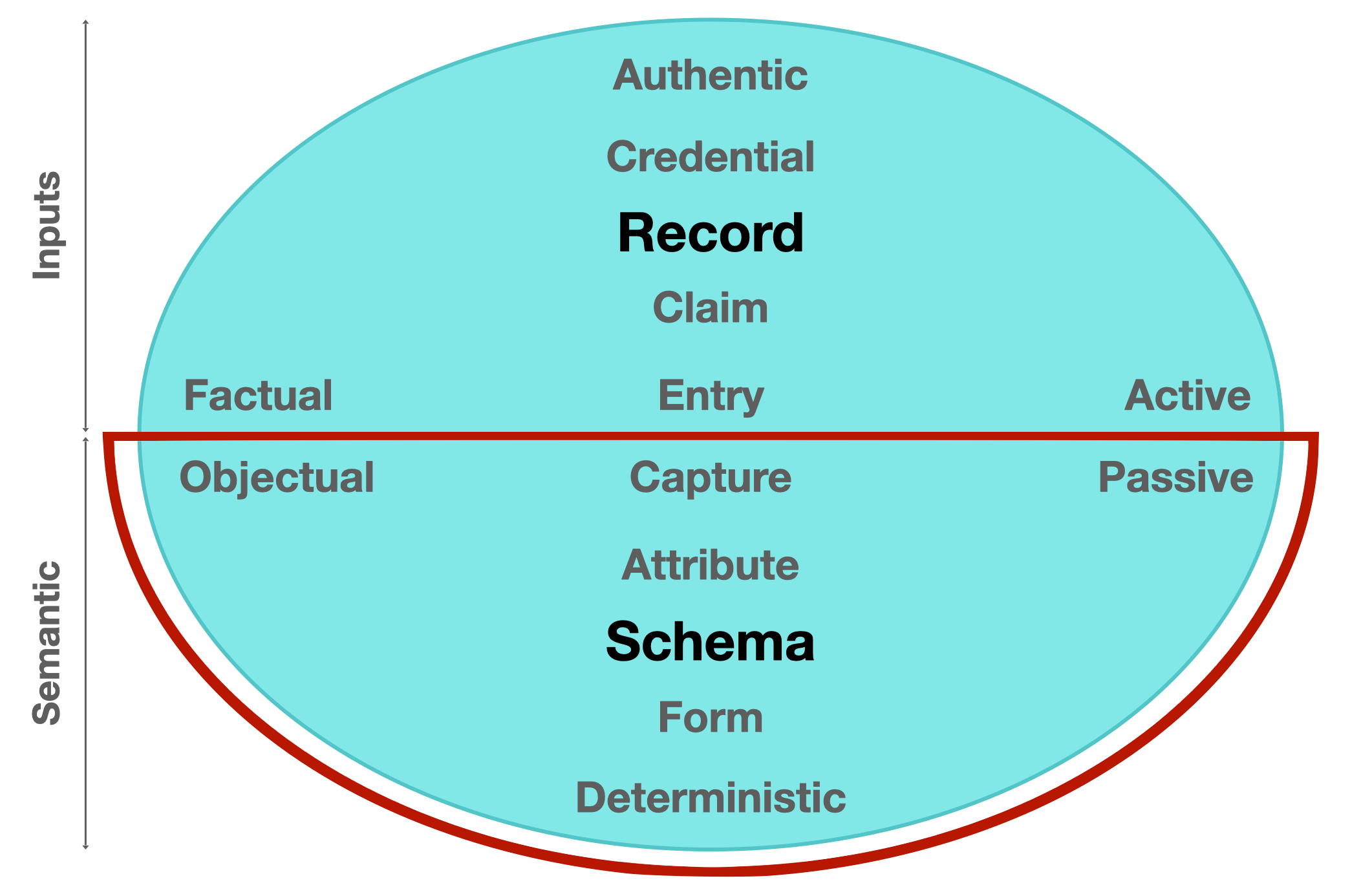

Data capture is defined as the process of collecting data electronically, allowing it to be stored, searched, or organized more efficiently. In a decentralized network, data capture requires the provision of deterministic fields in order to capture and store collected data. In the Model of Identifier States, all elements and characteristics of data capture are depicted in the southern hemispherical Semantic domain.

Semantic domain [passive] / the meaning and use of what is put in, taken in, or operated on by any process or system.

Figure 1. A component diagram highlighting the Semantic domain within a balanced network model.

The mission of the Semantics group (ISWG-S) is to define a data capture architecture consisting of stable schema bases and interoperable overlays for Internet-scale deployment. The scope of this sub-group is to define specifications and best practices that bring cohesion to data capture processes and other Semantic standards throughout the ToIP stack, whether these standards are hosted at the Linux Foundation or external to it. Other sub-group activities will include creating template Requests for Proposal (RFPs) and additional guidance to utility and service providers regarding implementations in this domain. The sub-group may also organize Task Forces and Focus Groups to accelerate the development of certain components if a majority of the sub-group members deem it appropriate and it is in line with the overall mission of the ToIP Foundation.

The post-millennial generation has witnessed an explosion of captured data points, which has opened up profound possibilities in both Artificial Intelligence (AI) and Internet of Things (IoT) solutions. It has also led to the collective realization that society’s current technological infrastructure is simply not equipped to fully support de-identification, or to entice corporations to break down internal data silos, streamline data harmonization processes, and ultimately resolve worldwide data duplication and storage resource issues.

Developing and deploying the right data capture architecture will improve the quality of externally pooled data for future AI and IoT solutions.

(Presentation and live demo / Tools tutorial / .CSV parsing tutorial)

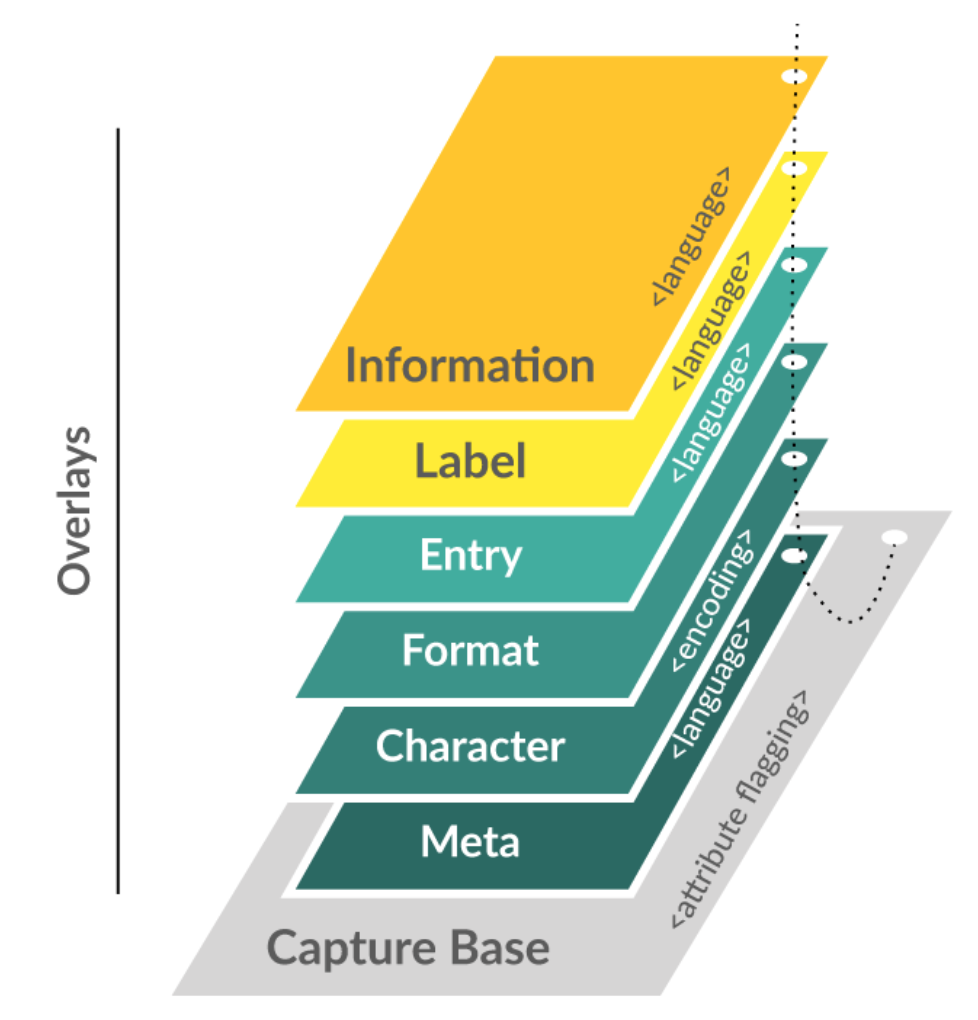

Overlays Capture Architecture (OCA) is an architecture that presents a schema as a multi-dimensional object consisting of a stable schema base and interoperable overlays. Overlays are task-oriented linked data objects that provide additional extensions, coloration, and functionality to the schema base. This degree of object separation enables issuers to make custom edits to the overlays rather than to the schema base itself. In other words, multiple parties can interact with and contribute to the schema structure without having to change the schema base definition. Because schema base definitions remain stable and in their purest form, a common base object is maintained throughout the capture process, which enables data standardization.
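The separation between a stable schema base and its overlays can be illustrated with a minimal Python sketch. This is not the serialization or identifier scheme defined by the OCA specification: the class names `SchemaBase` and `Overlay`, the `resolve` helper, the SHA-256 digest used as a link, and the example attributes are all assumptions made for illustration only.

```python
from dataclasses import dataclass
import hashlib
import json


@dataclass(frozen=True)
class SchemaBase:
    """Stable definition: attribute names and types only (hypothetical shape)."""
    name: str
    attributes: tuple  # tuple of (attribute_name, attribute_type) pairs

    def digest(self) -> str:
        # Content-derived identifier that overlays use to link to this base.
        # SHA-256 over JSON is an assumption, not the OCA identifier scheme.
        payload = json.dumps(
            {"name": self.name, "attributes": self.attributes}, sort_keys=True
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class Overlay:
    """Task-oriented object linked to a base by digest (hypothetical shape)."""
    base_digest: str
    kind: str      # e.g. "label_en" or "unit"
    values: dict   # attribute_name -> value contributed by this overlay


def resolve(base: SchemaBase, overlays: list) -> dict:
    """Combine a base with its overlays into one view without mutating the base."""
    expected = base.digest()
    view = {name: {"type": type_} for name, type_ in base.attributes}
    for overlay in overlays:
        if overlay.base_digest != expected:
            raise ValueError(f"overlay '{overlay.kind}' targets a different schema base")
        for attr, value in overlay.values.items():
            if attr not in view:
                raise KeyError(f"overlay '{overlay.kind}' references unknown attribute '{attr}'")
            view[attr][overlay.kind] = value
    return view


# Illustrative data: two parties contribute overlays to the same base.
base = SchemaBase("patient_visit", (("dob", "Date"), ("weight", "Number")))
digest_before = base.digest()

label_en = Overlay(base.digest(), "label_en", {"dob": "Date of birth", "weight": "Weight"})
unit = Overlay(base.digest(), "unit", {"weight": "kg"})

view = resolve(base, [label_en, unit])
```

In this sketch, adding or editing an overlay changes only the resolved view. The base and its digest stay the same, which is the property the paragraph above relies on for keeping a common base object throughout the capture process.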

OCA harmonizes data semantics. It is a global solution to semantic harmonization between data models and data representation formats. As a standardized global solution for data capture, OCA facilitates data language unification, promising to significantly enhance the ability to pool data more effectively for improved data science, statistics, analytics, and other meaningful services.

OCA resources: